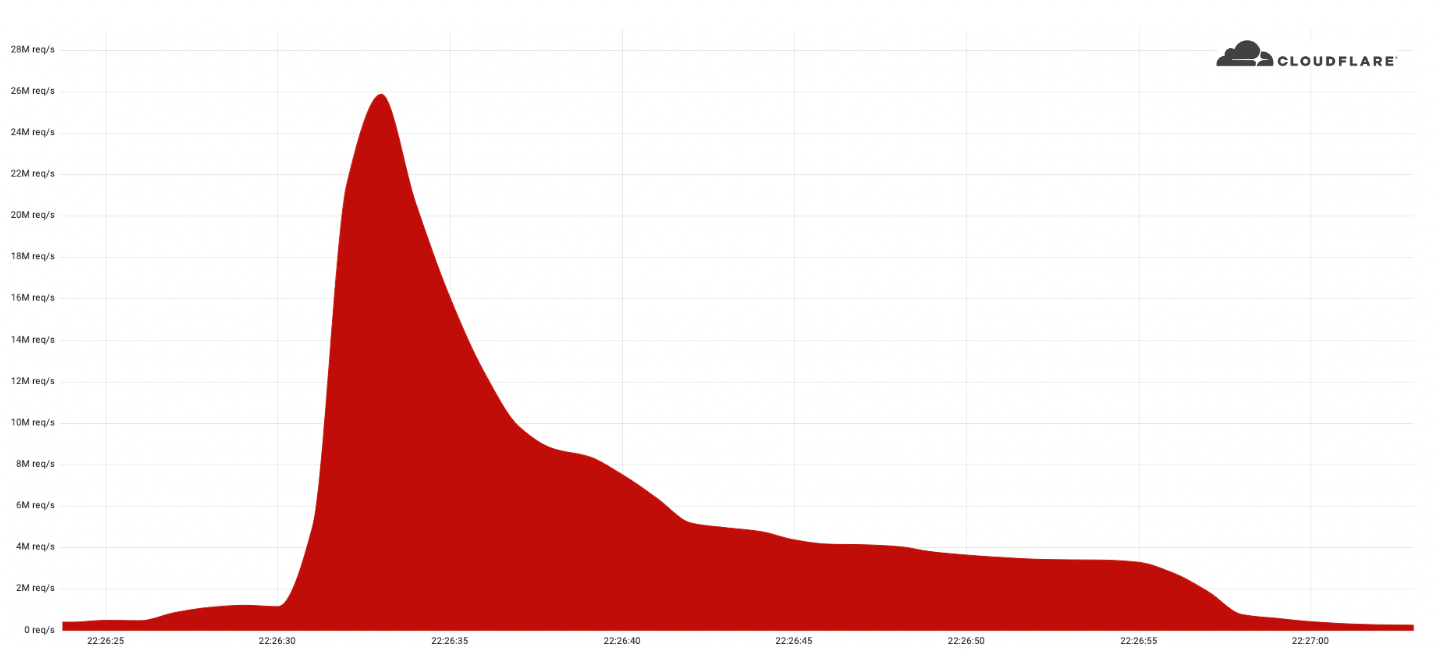

In June 2022, we reported on the largest HTTPS DDoS attack that we’ve ever mitigated: a 26 million request per second attack, the largest on record. Our systems automatically detected and mitigated this attack and many more. Since then, we have been tracking the botnet behind it, which we’ve called “Mantis”, and the attacks it has launched against almost a thousand Cloudflare customers.

Cloudflare WAF/CDN customers are protected against HTTP DDoS attacks, including Mantis attacks. See the end of this post for additional guidance on how to best protect your Internet properties against DDoS attacks.

Have you met Mantis?

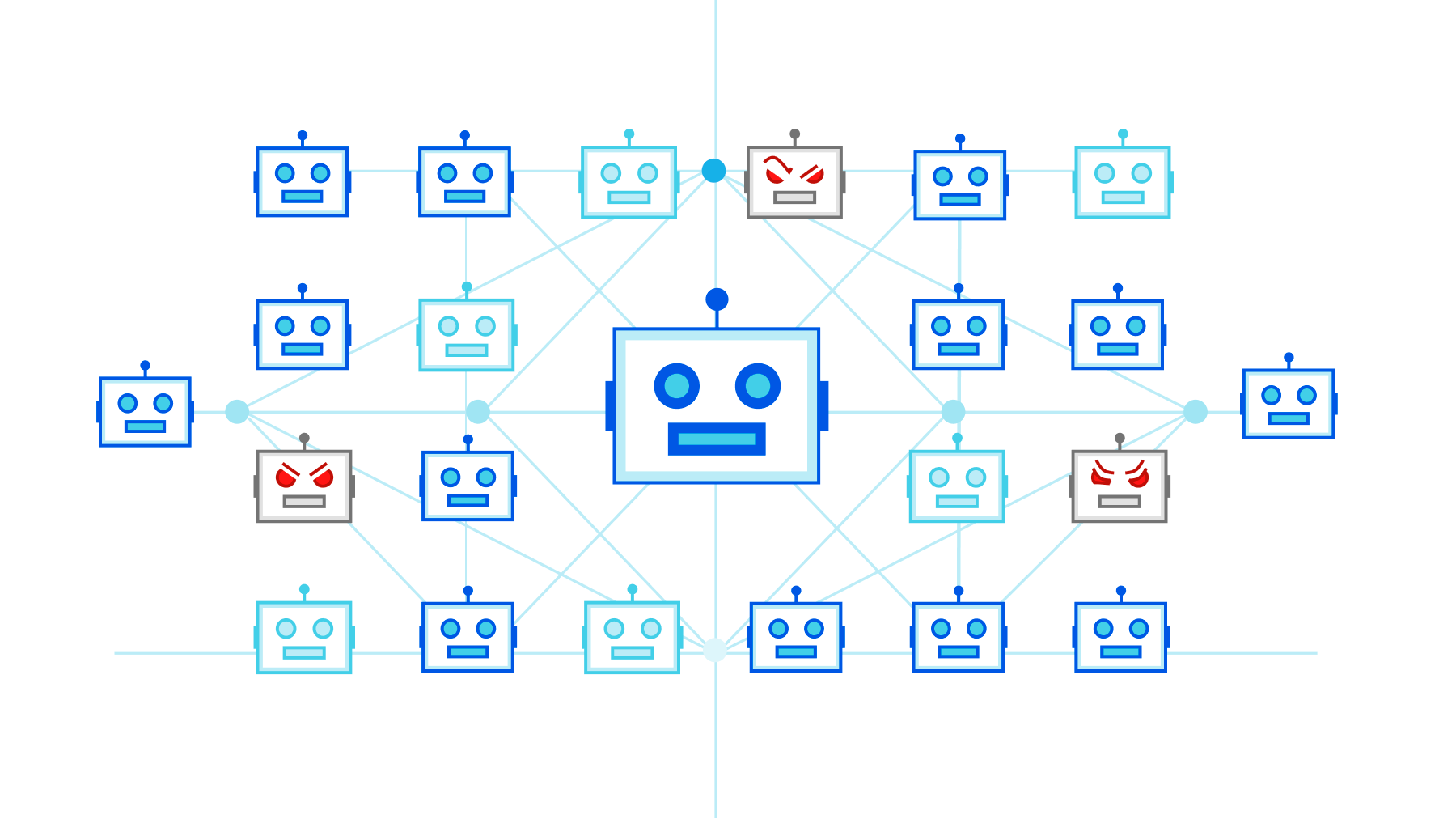

We named the botnet that launched the 26M rps (requests per second) DDoS attack “Mantis” because, like the mantis shrimp, it is small but very powerful. Mantis shrimps, also known as “thumb-splitters”, are less than 10 cm in length, but their claws are so powerful that they can generate a shock wave with a force of 1,500 newtons at speeds of 83 km/h from a standing start. Similarly, the Mantis botnet operates a small fleet of approximately 5,000 bots, yet it generates a massive force: it is responsible for the largest HTTP DDoS attacks we have ever observed.

The Mantis botnet was able to generate the 26M HTTPS requests per second attack using only 5,000 bots. I’ll repeat that: 26 million HTTPS requests per second using only 5,000 bots. That’s an average of 5,200 HTTPS rps per bot. Generating 26M HTTP requests is hard enough to do without the extra overhead of establishing a secure connection, but Mantis did it over HTTPS. HTTPS DDoS attacks are more expensive in terms of required computational resources because of the higher cost of establishing a secure TLS encrypted connection. This stands out and highlights the unique strength behind this botnet.
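The per-bot average above is simple division, and a short sketch makes it easy to check or rerun with other figures. The constant and function names here are illustrative assumptions, not part of any Cloudflare tooling; the two input numbers are the ones quoted in this post.

```python
# Figures quoted in the post (the names are hypothetical, chosen for illustration).
ATTACK_RPS = 26_000_000  # peak HTTPS requests per second
BOT_COUNT = 5_000        # approximate number of bots in the botnet


def per_bot_rps(total_rps: int, bots: int) -> float:
    """Return the average request rate each bot must sustain."""
    return total_rps / bots


average = per_bot_rps(ATTACK_RPS, BOT_COUNT)
print(f"{average:,.0f} HTTPS rps per bot")  # prints "5,200 HTTPS rps per bot"
```

This is only an average: it says nothing about how the load was actually distributed across individual bots.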

Unlike “traditional” botnets formed of Internet of Things (IoT) devices such as DVRs, CCTV cameras, or smoke detectors, Mantis uses hijacked virtual machines and powerful servers. This means that each bot has far more computational resources, which adds up to this combined thumb-splitting strength.

Mantis is the next evolution of the Meris botnet. The Meris botnet relied on MikroTik devices, but Mantis has branched out to include a variety of VM platforms and supports running various HTTP proxies to launch attacks. The name Mantis was chosen to be similar to “Meris” to reflect its origin, and also because this evolution hits hard and fast. Over the past few weeks, Mantis has been especially active, directing its strength towards almost 1,000 Cloudflare customers.

Who is Mantis attacking?

In our recent DDoS attack trends report, we talked about the increasing number of HTTP DDoS attacks. In the past quarter, HTTP DDoS attacks increased by 72%, and Mantis has surely contributed to that growth. Over the past month, Mantis has launched over 3,000 HTTP DDoS attacks against Cloudflare customers.

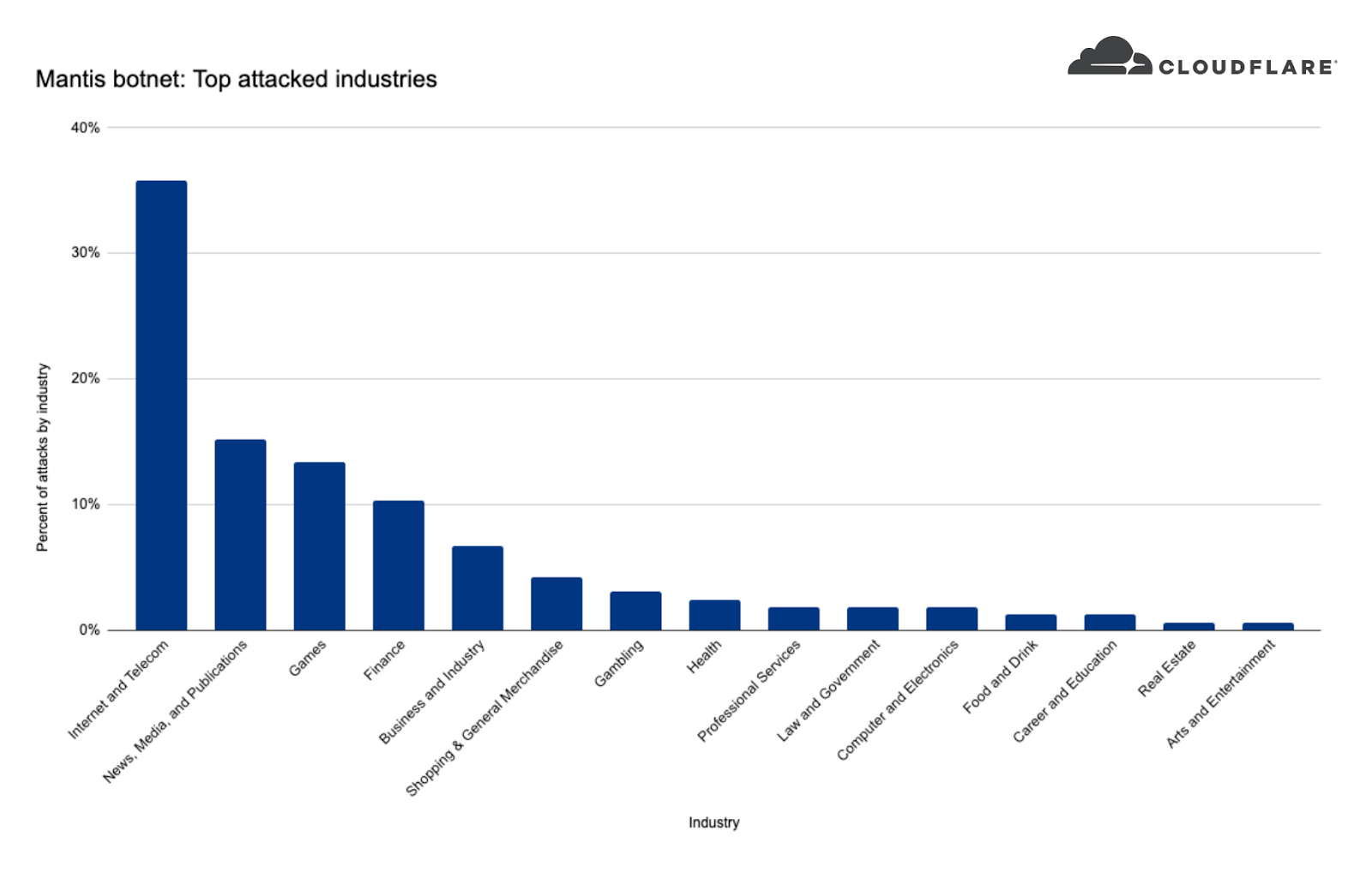

When we look at Mantis’ targets, we can see that the most attacked industry was Internet & Telecommunications, with 36% of the attack share. News, Media & Publishing came second, followed by Gaming and Finance.

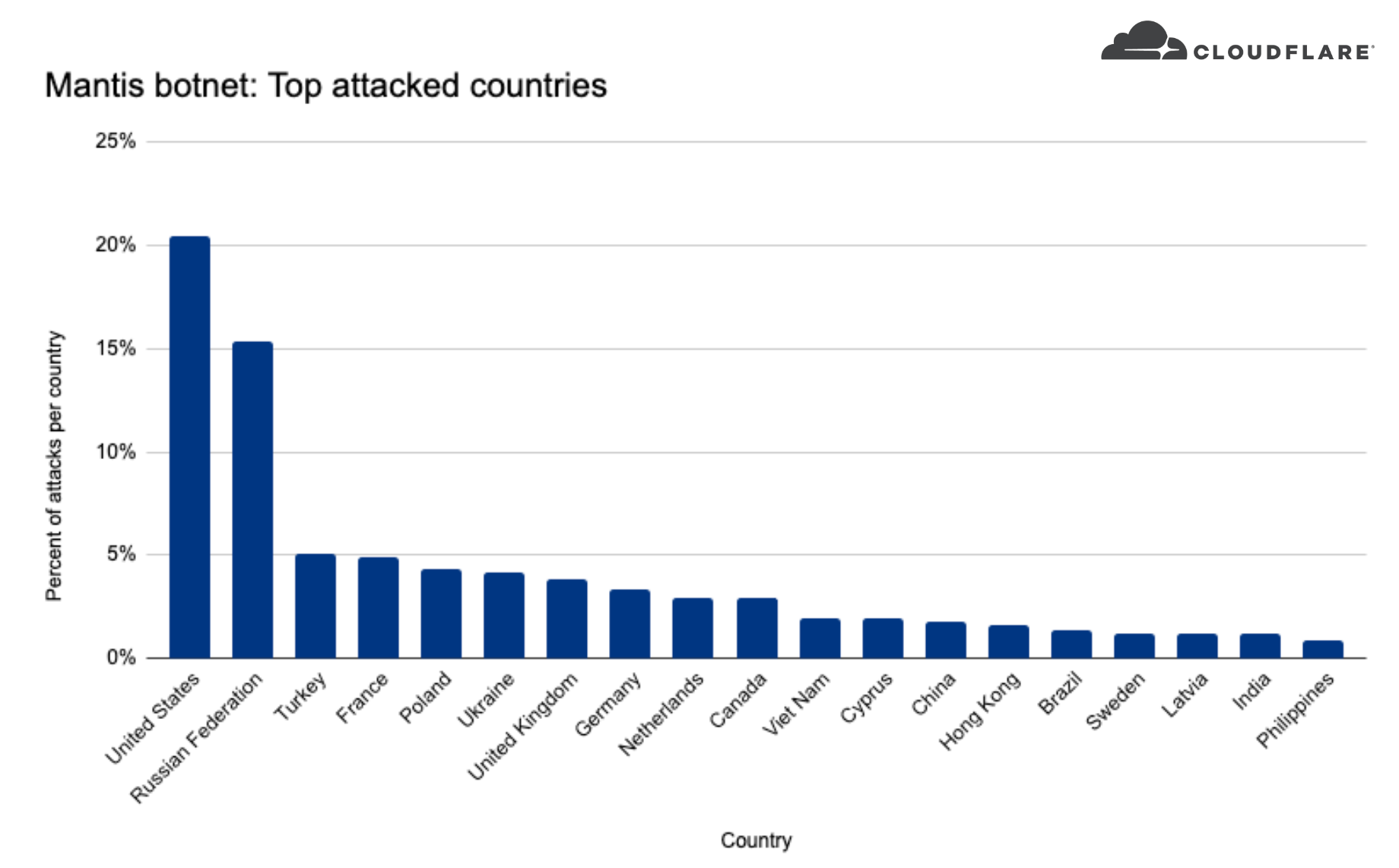

When we look at where these companies are located, we can see that over 20% of the DDoS attacks targeted US-based companies and over 15% targeted Russia-based companies, while Turkey, France, Poland, Ukraine, and other countries each accounted for less than 5%.

How to protect against Mantis and other DDoS attacks

Cloudflare’s automated DDoS protection system uses dynamic fingerprinting to detect and mitigate DDoS attacks. The system is exposed to customers as the HTTP DDoS Managed Ruleset. The ruleset is enabled and applies mitigation actions by default, so if you haven’t made any changes, there is no action for you to take: you are protected. You can also review our guides “Best Practices: DoS preventive measures” and “Responding to DDoS attacks” for additional tips and recommendations on how to optimize your Cloudflare configurations.

If you are only using Magic Transit or Spectrum but also operate HTTP applications that are not behind Cloudflare, we recommend onboarding them to Cloudflare’s WAF/CDN service to benefit from L7 protection.

Source:

https://blog.cloudflare.com/mantis-botnet/

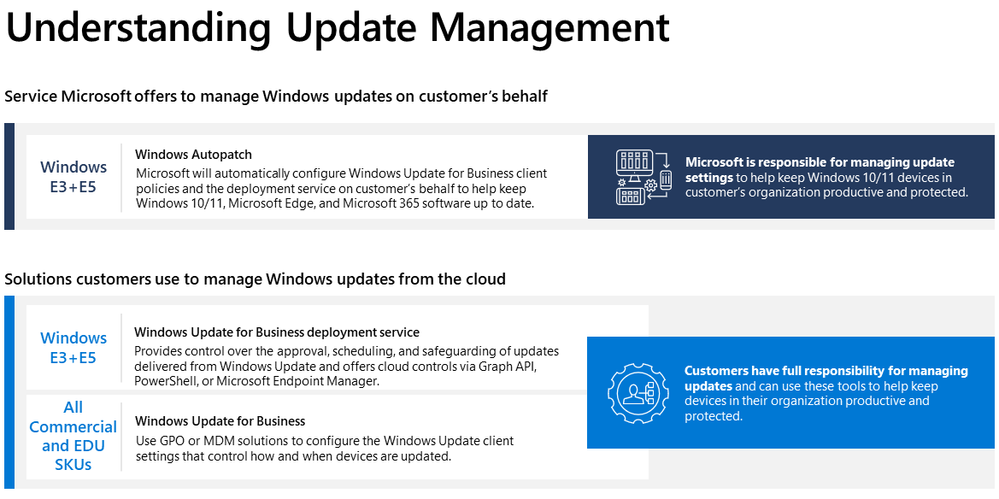

Windows Autopatch is a service that uses the Windows Update for Business solutions on your behalf.
