By: Alifiya Sadikali – Trendmicro

August 09, 2023


Discover the core principles and frameworks of Zero Trust, NIST 800-207 guidelines, and best practices when implementing CISA’s Zero Trust Maturity Model.

With the growing number of devices connected to the internet, traditional perimeter-based security measures are no longer enough to keep your digital assets safe. To protect your organization from digital threats, it’s crucial to establish strong security protocols and take proactive measures to stay vigilant.

What is Zero Trust?

Zero Trust is a cybersecurity philosophy based on the premise that threats can arise both inside and outside the network. Under Zero Trust, no user, system, or service is automatically trusted, regardless of whether it sits inside or outside the network perimeter. Zero Trust grants access only to authenticated and authorized users and devices, adding a layer of protection for sensitive data and applications. And if a breach does occur, compartmentalizing access to individual resources limits the potential damage.

Your organization should consider Zero Trust as a proactive security strategy to protect its data and assets better.

The pillars of Zero Trust

At its core, Zero Trust rests on a few fundamental principles:

- Verify explicitly. Grant access only after the user or device has been explicitly authenticated and authorized. Doing so helps ensure that only those with a legitimate need to access your organization’s resources can do so.

- Least privilege access. Give users access only to the resources they need to do their job and nothing more. Limiting access in this way reduces how much of your organization’s data and applications an attacker can reach if an account is misused or compromised.

- Assume breach. Act as if your organization’s security has already been compromised. Take steps to minimize the damage, including monitoring for unusual activity, limiting access to sensitive data, and ensuring that backups are up-to-date and secure.

- Microsegmentation. Divide your organization’s network into smaller, more manageable segments and apply security controls to each segment individually. This reduces the risk of a breach spreading from one part of your network to another.

- Security automation. Use tools and technologies to automate the process of monitoring, detecting, and responding to security threats. This helps keep your organization’s defenses current and lets it react quickly to new threats and vulnerabilities.
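To make "verify explicitly," "least privilege," and "microsegmentation" concrete, here is a minimal sketch of a default-deny access check. The roles, segment names, and resources are hypothetical, and this is an illustration of the principles rather than how any particular product implements them: a request passes only if it was explicitly verified and matches a narrowly scoped allow rule, so being on an internal segment earns no trust on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user_role: str       # hypothetical role name, e.g. "finance-analyst"
    source_segment: str  # network segment the request comes from
    dest_segment: str    # segment that hosts the target resource
    resource: str        # hypothetical resource identifier
    authenticated: bool  # outcome of explicit verification (e.g. MFA)


# Hypothetical allowlist of (role, source segment, destination segment, resource).
# Least privilege: each role gets only the flows it needs.
# Microsegmentation: flows are scoped segment-to-segment.
# Anything not listed is denied by default.
ALLOW_RULES = {
    ("finance-analyst", "corp-users", "finance-apps", "ledger-api"),
    ("hr-manager", "corp-users", "hr-apps", "payroll-db"),
}


def is_allowed(req: Request) -> bool:
    """Allow only explicitly verified requests that match an allow rule."""
    if not req.authenticated:
        # Verify explicitly: being on an internal segment earns no trust.
        return False
    key = (req.user_role, req.source_segment, req.dest_segment, req.resource)
    return key in ALLOW_RULES


if __name__ == "__main__":
    normal = Request("finance-analyst", "corp-users", "finance-apps", "ledger-api", True)
    lateral = Request("finance-analyst", "corp-users", "hr-apps", "payroll-db", True)
    unverified = Request("hr-manager", "corp-users", "hr-apps", "payroll-db", False)
    for r in (normal, lateral, unverified):
        print(r.user_role, "->", r.resource, ":", is_allowed(r))
```

The second request shows why segmentation matters under "assume breach": even a verified finance user cannot move laterally into the HR segment, because no rule grants that flow.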

A Zero Trust approach is a proactive and effective way to protect your organization’s data and assets from cyber-attacks and data breaches. By following these core principles, your organization can minimize the risk of unauthorized access, reduce the impact of a breach, and keep its security current and effective.

The role of NIST 800-207 in Zero Trust

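NIST Special Publication (SP) 800-207, Zero Trust Architecture, was developed by the National Institute of Standards and Technology. Rather than a general-purpose cybersecurity framework, it defines Zero Trust, describes the logical components of a Zero Trust architecture, and outlines deployment models and use cases that organizations can draw on to manage and mitigate cybersecurity risks.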

Designed to be flexible and adaptable across organizations and industries, the guidance lets each organization tailor its cybersecurity plans to its specific needs. Implementing it can help organizations improve their cybersecurity posture and protect against cyber threats.

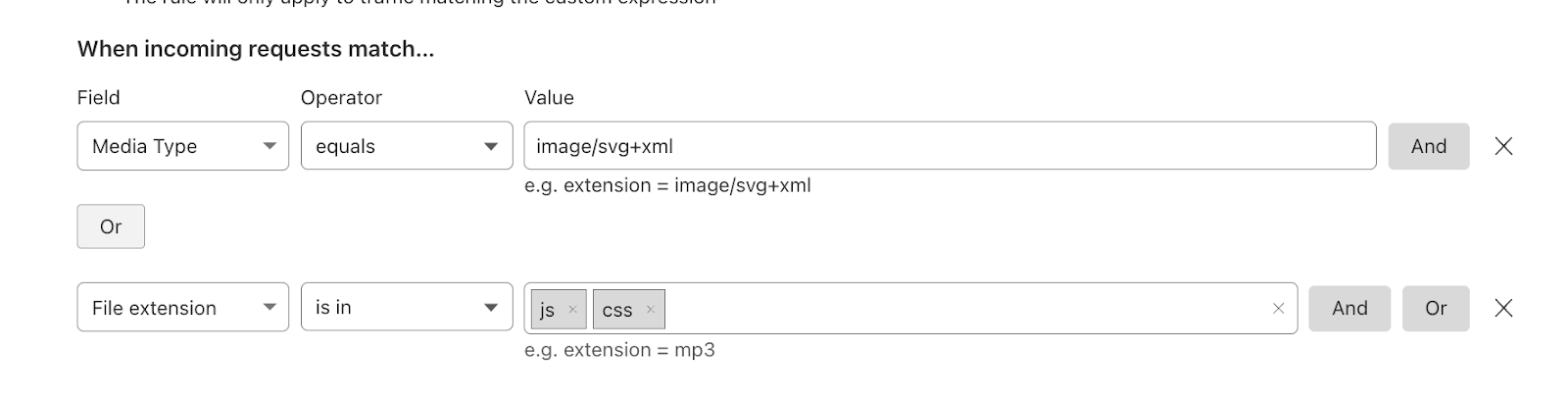

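One of the most important recommendations of NIST 800-207 is to establish a policy engine (PE), a policy administrator (PA), and a policy enforcement point (PEP). The PE decides whether to grant a subject access to a resource, the PA carries out that decision by establishing or shutting down the communication path, and the PEP enables, monitors, and terminates the connection between the subject and the resource. Together, these components help ensure consistent policy enforcement and that access is granted only to those who need it.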
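The division of labor among the three components can be sketched in a few classes. This is a simplified illustration, not an implementation described in SP 800-207: the class names, the session-token mechanism, and the three-signal "trust algorithm" (entitlement, device compliance, MFA) are all assumptions chosen to keep the example short.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple


@dataclass
class AccessRequest:
    subject: str
    resource: str
    device_compliant: bool  # e.g. reported by an endpoint agent (assumed signal)
    mfa_passed: bool


class PolicyEngine:
    """Makes the grant/deny decision (a deliberately simple trust algorithm)."""

    def __init__(self, entitlements: Dict[str, Set[str]]):
        self.entitlements = entitlements  # subject -> resources it may use

    def decide(self, req: AccessRequest) -> bool:
        entitled = req.resource in self.entitlements.get(req.subject, set())
        return entitled and req.device_compliant and req.mfa_passed


class PolicyAdministrator:
    """Carries out the engine's decision by opening or closing sessions."""

    def __init__(self, engine: PolicyEngine):
        self.engine = engine
        self.sessions: Dict[str, Tuple[str, str]] = {}  # token -> (subject, resource)

    def request_session(self, req: AccessRequest) -> Optional[str]:
        if not self.engine.decide(req):
            return None
        token = secrets.token_urlsafe(16)
        self.sessions[token] = (req.subject, req.resource)
        return token

    def revoke(self, token: str) -> None:
        self.sessions.pop(token, None)


class PolicyEnforcementPoint:
    """Guards the resource: forwards traffic only for a live, matching session."""

    def __init__(self, admin: PolicyAdministrator):
        self.admin = admin

    def forward(self, token: Optional[str], subject: str, resource: str) -> bool:
        if token is None:
            return False
        return self.admin.sessions.get(token) == (subject, resource)


if __name__ == "__main__":
    pa = PolicyAdministrator(PolicyEngine({"alice": {"payroll"}}))
    pep = PolicyEnforcementPoint(pa)
    token = pa.request_session(AccessRequest("alice", "payroll", True, True))
    print(pep.forward(token, "alice", "payroll"))  # True: session granted
    pa.revoke(token)
    print(pep.forward(token, "alice", "payroll"))  # False: session revoked
```

Keeping the decision (PE) separate from its execution (PA) and enforcement (PEP) means a policy change or a revoked session takes effect at every enforcement point without touching the resources themselves.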

Another critical recommendation is continuous monitoring combined with real-time, risk-based decision-making. This can help you quickly identify and respond to potential threats.
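A risk-based decision can be sketched as a weighted score that is recomputed whenever a monitored signal changes. The signals, weights, and thresholds below are hypothetical placeholders rather than values from NIST; the point is the shape of the logic: low risk is allowed, moderate risk triggers step-up verification, and high risk is denied.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"  # ask for additional verification, such as MFA
    DENY = "deny"


@dataclass
class Signals:
    new_device: bool
    impossible_travel: bool
    edr_alerts_24h: int  # endpoint alerts for this device in the last 24 hours
    off_hours: bool


# Hypothetical weights and thresholds; a real deployment would tune these.
WEIGHTS = {"new_device": 20, "impossible_travel": 50, "off_hours": 10}
PER_EDR_ALERT = 15
STEP_UP_AT = 30
DENY_AT = 60


def risk_score(s: Signals) -> int:
    """Sum weighted risk signals into a score capped at 100."""
    score = 0
    if s.new_device:
        score += WEIGHTS["new_device"]
    if s.impossible_travel:
        score += WEIGHTS["impossible_travel"]
    if s.off_hours:
        score += WEIGHTS["off_hours"]
    score += PER_EDR_ALERT * s.edr_alerts_24h
    return min(score, 100)


def decide(s: Signals) -> Decision:
    """Map the current score to an access decision."""
    score = risk_score(s)
    if score >= DENY_AT:
        return Decision.DENY
    if score >= STEP_UP_AT:
        return Decision.STEP_UP
    return Decision.ALLOW


if __name__ == "__main__":
    # Continuous evaluation: re-run decide() whenever a signal changes.
    session = Signals(new_device=False, impossible_travel=False,
                      edr_alerts_24h=0, off_hours=False)
    print(decide(session).value)  # allow (score 0)
    session.edr_alerts_24h = 2
    print(decide(session).value)  # step_up (score 30)
    session.impossible_travel = True
    print(decide(session).value)  # deny (score 80)
```

Because the decision is recomputed mid-session, access that was appropriate at login can be narrowed or cut off as soon as new evidence arrives.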

Additionally, it is essential to understand and map the dependencies among assets and resources. Doing so helps you target your security measures where potential vulnerabilities would have the greatest impact.
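One practical use of a dependency map is estimating the "blast radius" of a compromised asset. The sketch below assumes a hypothetical set of assets and uses a plain breadth-first search over the reversed dependency edges to find every asset that directly or transitively relies on the one at risk.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical dependency map: each asset lists the assets it relies on.
DEPENDS_ON: Dict[str, List[str]] = {
    "web-frontend": ["orders-api", "auth-service"],
    "orders-api": ["orders-db", "auth-service"],
    "reporting": ["orders-db"],
    "auth-service": ["identity-store"],
    "orders-db": [],
    "identity-store": [],
}


def reverse_edges(graph: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Invert the map so each asset lists the assets that rely on it."""
    reverse: Dict[str, List[str]] = {node: [] for node in graph}
    for node, deps in graph.items():
        for dep in deps:
            reverse.setdefault(dep, []).append(node)
    return reverse


def blast_radius(graph: Dict[str, List[str]], compromised: str) -> Set[str]:
    """Breadth-first search for every asset that depends on `compromised`."""
    dependents = reverse_edges(graph)
    affected: Set[str] = set()
    queue = deque([compromised])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected


if __name__ == "__main__":
    for asset in ("identity-store", "orders-db"):
        print(asset, "->", sorted(blast_radius(DEPENDS_ON, asset)))
```

Assets with the largest blast radius (here, the identity store that every authenticated path relies on) are natural candidates for the strictest controls and monitoring.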

Finally, NIST recommends replacing traditional paradigms, such as implicit trust in assets or entities based on their network location, with a “never trust, always verify” approach. Adopting this approach can better protect your organization’s assets and resources from internal and external threats.

CISA’s Zero Trust Maturity Model

The Zero Trust Maturity Model (ZMM), developed by CISA, provides a comprehensive framework for assessing an organization’s Zero Trust posture. This model covers critical areas including:

- Identity management: To implement a Zero Trust strategy, it is important to begin with identity. This involves continuously verifying, authenticating, and authorizing any entity before granting access to corporate resources. To achieve this, comprehensive visibility is necessary.

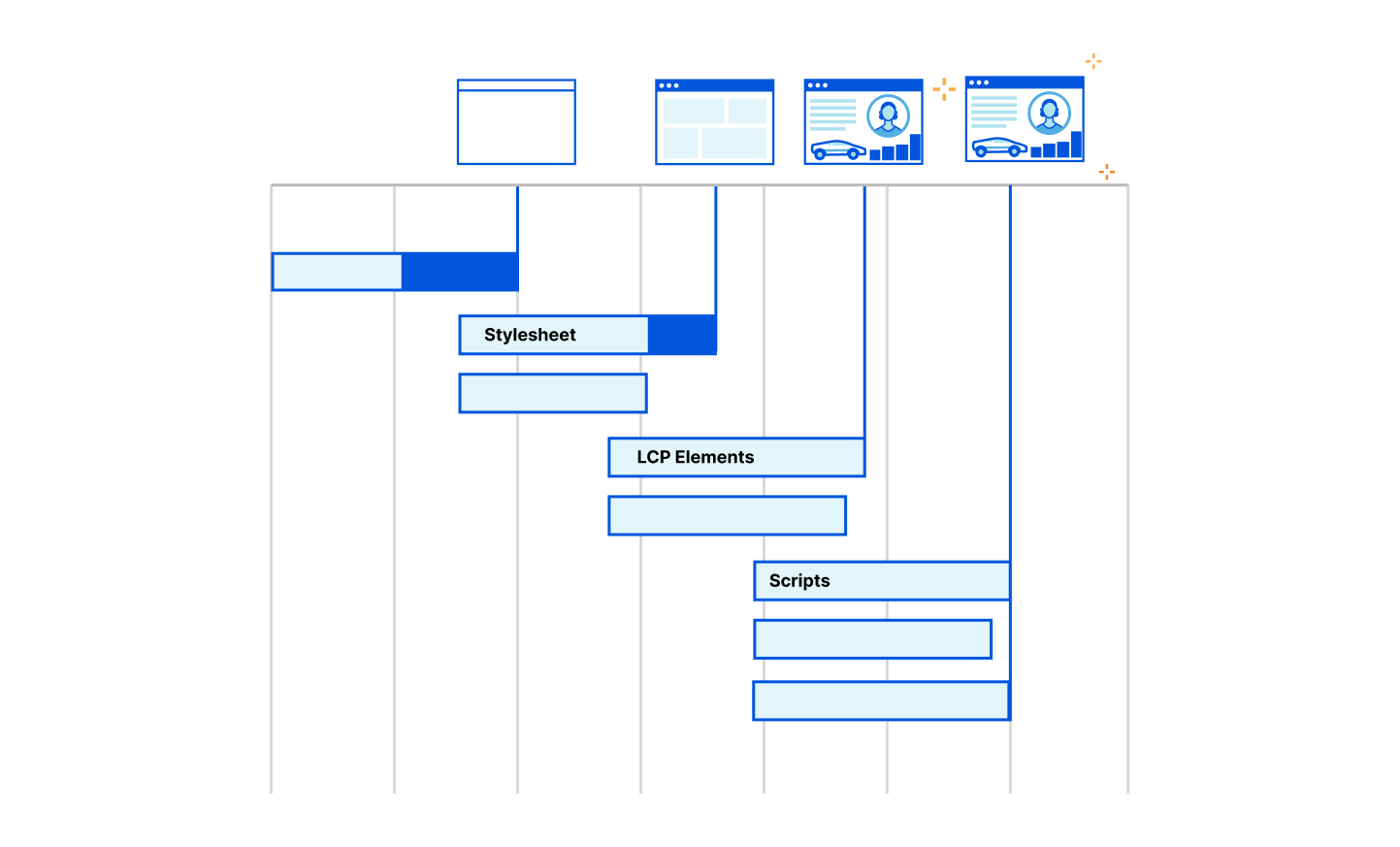

- Devices, networks, applications: To maintain Zero Trust, use endpoint detection and response capabilities to detect threats and keep track of device assets, network connections, application configurations, and vulnerabilities. Continuously assess and score device security posture and implement risk-informed authentication protocols to ensure only trusted devices, networks, and applications can access sensitive data and enterprise systems.

- Data and governance: To maximize security, implement prevention, detection, and response measures for identity, devices, networks, IoT, and cloud. Monitor legacy protocols and device encryption status. Apply Data Loss Prevention and access control policies based on risk profiles.

- Visibility and analytics: Zero Trust strategies cannot succeed within silos. By collecting data from sources across the organization, security teams can gain a complete view of all entities and resources. Analyzing this data with threat intelligence produces reliable, contextualized alerts, and tracking broader incidents back to the same root cause helps organizations make informed policy decisions and take appropriate response actions.

- Automation and orchestration: To automate security responses effectively, you need comprehensive data to inform the orchestration of systems and the management of permissions, including which types of data are being protected and which entities are accessing them. This supports proper oversight and security throughout the development of functions, products, and services.
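CISA’s published model (version 2.0) rates progress in four stages (Traditional, Initial, Advanced, and Optimal) across five pillars (Identity, Devices, Networks, Applications and Workloads, and Data), with cross-cutting capabilities such as visibility and analytics, automation and orchestration, and governance. A minimal self-assessment helper, assuming a hypothetical convention of treating the weakest pillar as the overall floor and the first place to invest, might look like this; it is an illustration, not CISA’s scoring method.

```python
from enum import IntEnum
from typing import Dict, List, Tuple


class Stage(IntEnum):
    # The four stages named in CISA's Zero Trust Maturity Model v2.0.
    TRADITIONAL = 0
    INITIAL = 1
    ADVANCED = 2
    OPTIMAL = 3


# The five pillars in CISA's model; cross-cutting capabilities omitted for brevity.
PILLARS = ("Identity", "Devices", "Networks", "Applications and Workloads", "Data")


def summarize(assessment: Dict[str, Stage]) -> Tuple[Stage, List[str]]:
    """Return the lowest stage across pillars and the pillars stuck at it.

    Treating the weakest pillar as the overall posture is a hypothetical
    convention for prioritization, not CISA's scoring method.
    """
    missing = [p for p in PILLARS if p not in assessment]
    if missing:
        raise ValueError(f"unassessed pillars: {missing}")
    floor = min(assessment[p] for p in PILLARS)
    priorities = [p for p in PILLARS if assessment[p] == floor]
    return floor, priorities


if __name__ == "__main__":
    # Hypothetical self-assessment for illustration only.
    example = {
        "Identity": Stage.ADVANCED,
        "Devices": Stage.INITIAL,
        "Networks": Stage.INITIAL,
        "Applications and Workloads": Stage.ADVANCED,
        "Data": Stage.TRADITIONAL,
    }
    floor, priorities = summarize(example)
    print(floor.name, priorities)  # TRADITIONAL ['Data']
```

Using an ordered enum keeps comparisons between stages explicit, and refusing to summarize when a pillar is unassessed avoids reporting a misleadingly high floor.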

By thoroughly evaluating these areas, your organization can identify potential gaps in its security measures and act promptly to improve its overall cybersecurity posture. CISA’s ZMM offers a holistic approach to security that helps your organization stay vigilant against potential threats.

Implementing Zero Trust with Trend Vision One

Trend Vision One aligns with industry frameworks and best practices, including those from NIST and CISA, and offers coverage from prevention to extended detection and response (XDR) across all pillars of Zero Trust.

Trend Vision One is an innovative solution that empowers organizations to identify their vulnerabilities, monitor potential threats, and evaluate risks in real time, enabling them to make informed decisions regarding access control. With its open platform approach, Trend Vision One integrates with third-party partner ecosystems, including identity and access management (IAM), vulnerability management, firewall, breach and attack simulation (BAS), and security information and event management / security orchestration, automation, and response (SIEM/SOAR) vendors, providing a comprehensive and unified source of truth for risk assessment within your current security framework. Additionally, Trend Vision One is interoperable with secure web gateway (SWG), cloud access security broker (CASB), and zero trust network access (ZTNA) solutions, and includes Attack Surface Management and XDR, all within a single console.

Conclusion

CISOs today understand that the journey towards achieving Zero Trust is a gradual process that requires careful planning, step-by-step implementation, and a shift in mindset towards proactive security and cyber risk management. By understanding the core principles of Zero Trust and using the guidelines provided by NIST and CISA to operationalize Zero Trust with Trend Vision One, you can help ensure that your organization’s cybersecurity measures are strong and can adapt to the constantly changing threat landscape.


Source: https://www.trendmicro.com/en_us/research/23/h/industry-zero-trust-frameworks.html